Intel launches Private AI Collaborative Research Institute

09.02.2021

“Intel, in collaboration with Avast and Borsetta, launched the Private AI Collaborative Research Institute to advance and develop technologies in privacy and trust for decentralized artificial intelligence (AI). The companies issued a call for research proposals earlier this year and selected the first nine research projects to be supported by the institute at eight universities worldwide.”

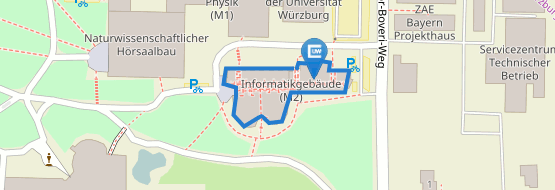

The Private AI Collaborative Research Institute selected its first nine research projects, distributed among eight universities worldwide. The University of Würzburg is one of them.

The industry is trending toward intelligent edge systems. Algorithms such as neural networks and distributed ledgers are gaining traction at the edge, running on the device itself without relying on cloud infrastructure. To be effective, they require vast amounts of data, much of which is sensed at the edge, for example in vehicle routing, industrial monitoring, security threat monitoring, or search term prediction.

The Private AI Collaborative Research Institute will focus its efforts on overcoming five main challenges:

- Training data is decentralized in isolated silos and often inaccessible.

- Today’s solutions are insecure and require a single trusted data center.

- Centralized models become obsolete quickly.

- Centralized compute resources are costly and throttled by communication and latency.

- Federated machine learning (FL) is limited.

While FL can access data at the edge, it cannot reliably guarantee privacy and security. This is where Prof. Dr. Dmitrienko, head of the Secure Software Systems research group at the University of Würzburg, will contribute by designing an FL framework that is resilient against security and privacy threats. The design will incorporate security mechanisms against attack vectors such as data poisoning and model inference. It will focus on integrating hardware-assisted security and trusted execution environments of varying capabilities to achieve stronger privacy and integrity guarantees.
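To illustrate why data poisoning is a concern in FL, here is a minimal sketch in Python. It is not the framework planned by the research group or the institute. The client updates, the `aggregate` helper, and the choice of a coordinate-wise median as the robust alternative are all assumptions made purely for illustration.

```python
from statistics import mean, median


def aggregate(updates, combine):
    """Combine per-client weight vectors coordinate by coordinate."""
    return [combine(coords) for coords in zip(*updates)]


# Hypothetical model updates: four honest clients and one poisoned client.
honest = [[0.9, -0.2], [1.1, -0.1], [1.0, -0.3], [1.0, -0.2]]
poisoned = [[100.0, 50.0]]
updates = honest + poisoned

# Plain averaging: a single outlier drags the result far from the honest values.
averaged = aggregate(updates, mean)

# Coordinate-wise median: one outlier among five clients cannot move the result.
robust = aggregate(updates, median)

print("mean:  ", averaged)
print("median:", robust)
```

In this toy setting, the averaged update is dominated by the single poisoned client, while the median stays within the range of the honest updates. A median alone does not address model inference or stronger poisoning strategies, which is part of why the project also considers hardware-assisted security and trusted execution environments.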

More information at private-ai.org

People involved: Prof. A. Dmitrienko, Christoph Sendner, and Torsten Krauß.